ATO Copilot and the Compliance Gap in Agentic Software

Published:

At c0mpiled-10/DC: AI for Government, I built ATO Copilot, a four-hour hackathon prototype for source-backed authorization support. It won 1st place, but the more interesting part was the question it explored.

Can AI help government teams move through authorization and compliance workflows by grounding every recommendation in source evidence?

ATO is one instance of a broader pattern: high-trust workflows where messy evidence has to become structured reasoning before a human can make an accountable decision.

That question matters more now because software development is accelerating faster than compliance is.

Agentic coding tools are changing the rate at which software can be produced. A small team can now generate features, tests, infrastructure changes, documentation, and pull requests at a tempo that would have looked unrealistic a few years ago. The bottleneck is shifting away from writing code and toward proving that the resulting system can be trusted.

In high-trust environments, that proof burden does not disappear. It compounds.

Every system change creates questions:

- What changed?

- Which controls are affected?

- What evidence supports the claim?

- What gaps remain?

- What would a reviewer ask?

- Which risks are acceptable, and who accepted them?

Human-driven compliance workflows were already strained when software moved at human speed. They become structurally mismatched when software starts moving at agentic speed.

The issue is not that humans are unnecessary. It is that human review capacity becomes the scarce resource. If AI can generate more software, more configuration, more infrastructure, and more documentation, then organizations also need systems that can generate more traceability, more evidence mapping, more control awareness, and more reviewer-ready context.

Otherwise, the future looks like this:

- Agentic engineering increases delivery velocity.

- Compliance teams receive more artifacts, more diffs, and more systems to review.

- Manual evidence collection and control mapping become the bottleneck.

- Authorization queues get longer.

- Teams route around the process or slow down to survive it.

Neither outcome is acceptable.

The right answer is not to remove humans from authorization decisions. The right answer is to give humans better instruments.

What ATO Copilot does

ATO Copilot is a prototype for source-backed authorization support.

The demo ingests synthetic evidence artifacts and produces structured control analysis. It focuses on a few concrete tasks:

- mapping evidence to relevant control families

- surfacing likely reviewer questions

- identifying gaps or weak claims

- recommending next actions

- keeping outputs traceable to source material

The important design choice is that the system is not a generic chatbot.

For example, if an evidence artifact claims that audit logging is enabled, a useful system should not simply repeat that claim. It should map the claim to the relevant control area, quote the exact source text, identify whether retention or access review evidence is missing, and suggest what a reviewer should inspect next.

A blank chat interface asks the user to know what to ask. A compliance workflow needs the opposite: it should shape messy evidence into reviewer-ready work products. The interaction should start with artifacts and produce structured analysis, not start with a prompt box and hope the user interrogates the system correctly.

That is the difference between AI as an answer machine and AI as workflow infrastructure.

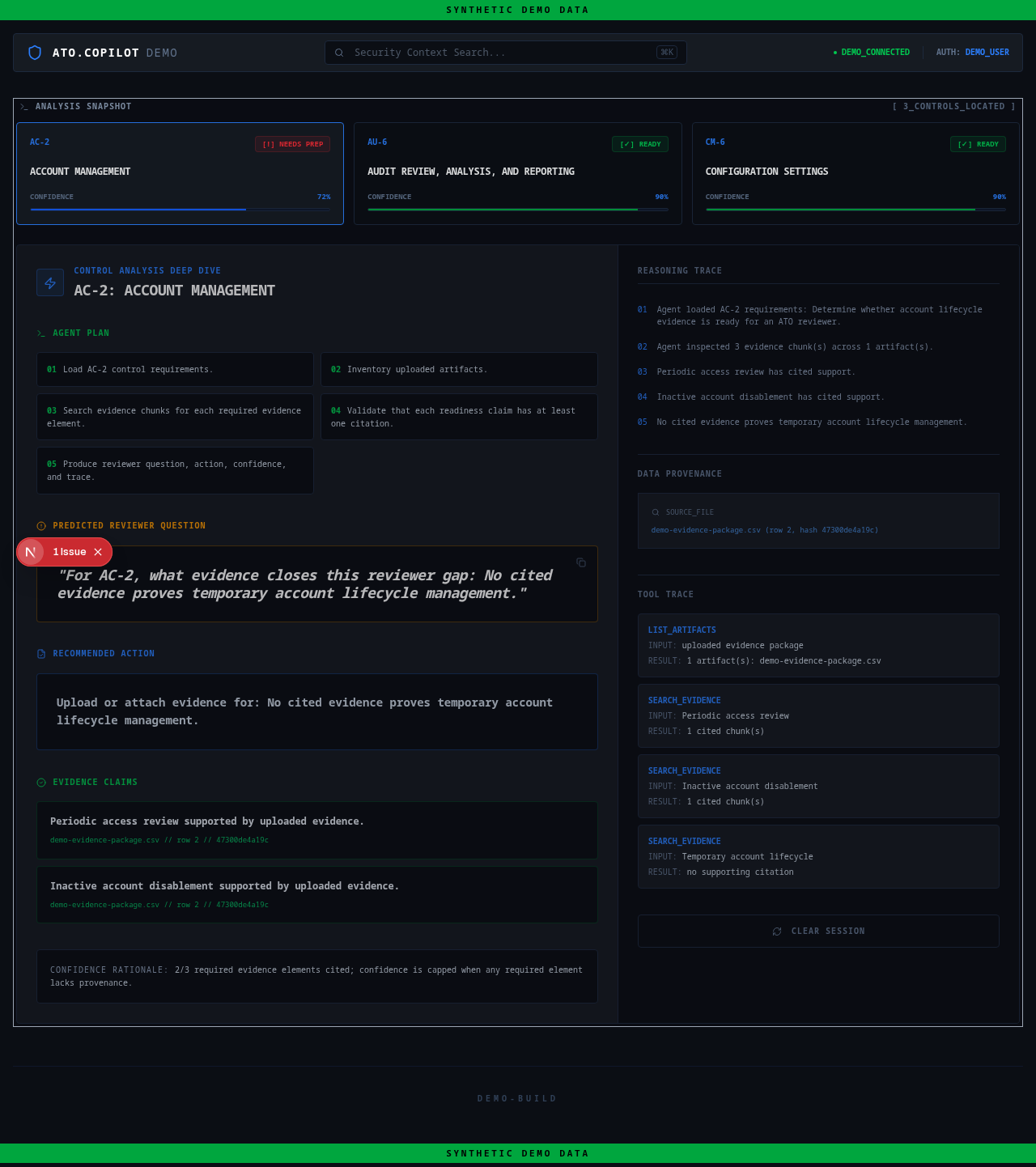

ATO Copilot demo interface using synthetic evidence. The screenshot shows source-backed control analysis, reviewer questions, recommended actions, and provenance traces.

Why this matters

Authorization, compliance, audit, procurement, grants, and program review workflows all share the same core pattern:

messy evidence → structured reasoning → accountable decision

Frontier models are good at language and synthesis, but high-trust environments need more than plausible summaries. They need systems that can explain where claims came from, what evidence supports them, what remains uncertain, and what a responsible human should inspect next.

This becomes more urgent as agentic coding changes the economics of software production.

If one engineer can produce ten times more system changes, the review surface expands. If multiple agents can modify code, infrastructure, tests, and documentation in parallel, the evidence surface expands again. The compliance function cannot scale by asking humans to read every artifact manually at the same depth and speed.

That does not mean compliance should become fully autonomous. It means compliance tooling needs to become evidence-native.

A useful system should be able to answer:

- Here is the evidence we found.

- Here is the control or requirement it appears to support.

- Here is the exact source text behind that mapping.

- Here is the part that looks weak.

- Here is the likely reviewer objection.

- Here is the next action a human should take.

This is the kind of work AI should do in government and regulated environments: compress the evidence surface, preserve traceability, and raise the quality of human judgment.

The product thesis

Most government AI demos begin as chat over documents. That can be useful, but it is not enough for high-trust workflows.

The stronger pattern is workflow-native AI:

- source-backed

- auditable

- constrained

- artifact-driven

- embedded in real approval paths

- designed around trust, not just speed

ATO Copilot is an early exploration of that pattern.

The prototype does not claim to automate authorization decisions. It does not contain CUI, customer data, or official assessment output. All demo evidence is synthetic.

The goal is narrower and more useful: show how AI can turn evidence artifacts into traceable control analysis and reviewer-ready next steps.

The broader shift

As software becomes more agentic, governance has to become more computational.

Not bureaucratic. Not performative. Computational.

That means policies, controls, evidence, approvals, and risks need to be represented in ways machines can help reason over while humans remain accountable for judgment.

The teams that figure this out will move faster without lowering trust. The teams that do not will face a growing mismatch between how quickly systems can be built and how slowly they can be approved.

That is the gap ATO Copilot is pointed at.

The prototype is public here: https://github.com/EthanHNguyen/ato-copilot

It uses synthetic evidence only and is intended as a product/architecture sketch, not an operational authorization system.